This creates an interesting starting point: between private use — shaped by a personal need for orientation and a direct user experience — and professional use in day-to-day care, there are not only different requirements, but also very different ideas about what AI should and should not do.

Development and tensions

The interpretation of medical data in healthcare takes place on several levels, from measuring individual laboratory values to making complex treatment decisions. With the rapid development of large language models (LLMs) and products built on them, such as ChatGPT Health and Claude for Healthcare, new tools are emerging that enable highly aggregated interpretation. This creates a tension: on the one hand, LLMs offer a holistic view of scattered health data and provide users with directly usable assessments. On the other hand, they are being measured against traditional, specialised interpretation by medical professionals, especially where context-dependent, rule-based, and technically deep assessment is required.

This article examines the levels of data interpretation, the real-world use of LLMs in healthcare (despite understandable reservations), and why differentiated, multi-level interpretation remains indispensable — especially in laboratory medicine.

How we understand usage: survey versus real behaviour

The discussion around LLMs in medicine is often accompanied by legitimate concerns: data protection, responsibilities, lack of transparency, potential hallucinations, and the question of whether sensitive information belongs in online systems at all.

At the same time, practice reveals a dynamic reality: many people are already using ChatGPT and similar tools, partly officially in pilot contexts, partly informally as a “second pair of eyes” for wording, summaries, or interpretation.

Methodologically, this can be framed from two perspectives:

- Surveys: What do physicians say about how they use AI (or would use it)?

- Real behaviour: What is actually being used, in which situations, and with what patterns?

This second perspective is often especially revealing, because it shows where everyday clinical practice is already making pragmatic decisions today — regardless of whether the debate has been fully settled.

LLMs in healthcare: new tools, new usage contexts

Large language models have entered the healthcare sector in a very short time. Two different directions can be observed:

- End-user-oriented applications (e.g. ChatGPT Health)

These systems are designed to help people understand their health information: explanations of findings, preparation for doctor visits, summaries, and trend analyses. The focus is often on clarity, orientation, and linking different personal data sources.

- Professional or B2B-oriented applications (e.g. Claude for Healthcare)

Here, the emphasis is on integration into the processes of providers and payers: documentation support, administrative workflows, structured preparation of information, and privacy-compliant integration into existing systems.

What matters is this: both directions shift interpretation “upward” — away from individual data points and toward aggregated, case-based summaries. This is useful, but it also carries risks when specificity, reference logic, local standards, and diagnostic pathways are not properly taken into account.

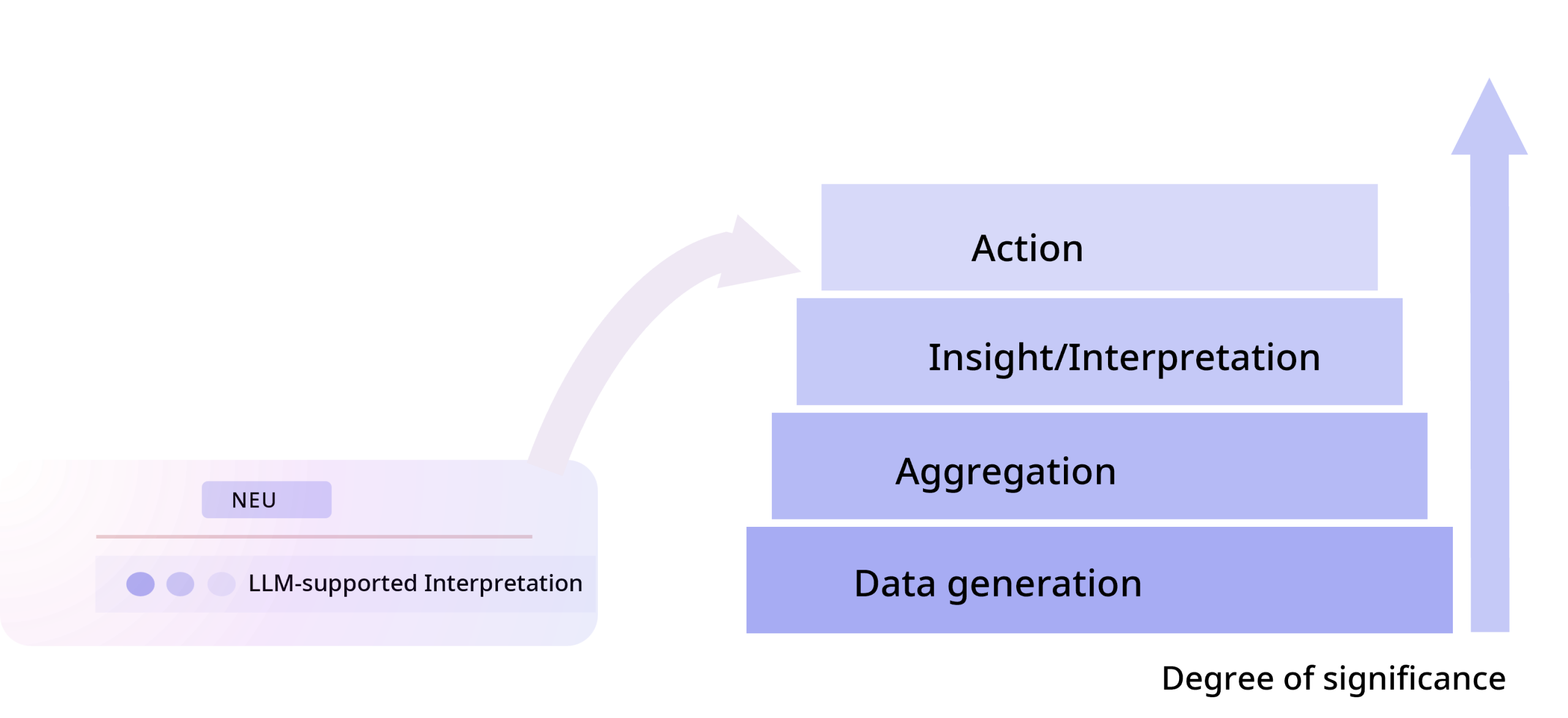

The data stack: from generation to treatment decision

To understand the level at which interpretive systems operate, it helps to look at the “data stack” in healthcare, which runs from data generation to the treatment decision.

As a general principle, the higher up the stack interpretation takes place, the more information is potentially available — for example, when radiology, clinical course, and medication are considered alongside laboratory values. At the same time, the danger increases that specific diagnostic logic becomes “generalised” and local details get lost.

The laboratory as a place of clinical interpretation

Laboratories are often seen as data suppliers — but in practice they are also sites of highly specialised interpretation. Laboratory medicine does not mean measurement alone, but also:

- technical validation (analytics, plausibility, preanalytics)

- contextualised classification (reference intervals, interferences, patterns)

- communication and consultation on clinical questions

- structured report comments and guidance for interpretation

Especially in complex questions, “interpretation in the laboratory” is an important component of diagnostic quality.

Reflex and “Insight to Action”: why workflows are decisive

A central added value arises not only from “better texts,” but from intelligent workflows that translate interpretation into action — and do so in a rule-based, traceable, and reproducible way.

The principle can be described as follows:

- Insight: recognising what the result means in this context (including uncertainties and alternatives)

- Action: deriving what should sensibly happen next (e.g. additional parameters, follow-up monitoring, further differential testing)

In laboratory medicine in particular, this “insight to action” approach has been established for years — for example through defined diagnostic pathways and reflex strategies. LLMs can complement this by bundling information in an understandable way or structuring clinical contexts. The crucial difference remains: workflows must be locally correct (references, SOPs, indication logic, responsibilities) and must not be replaced by generic, globally averaged answers.
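
The insight-to-action principle described above can be sketched as a small rule table. This is a minimal illustration, not a real laboratory configuration: the names `Result`, `ReflexRule`, `LOCAL_RULES`, and `derive_actions`, the rule identifiers, and the TSH thresholds are all assumptions made for the example and carry no clinical authority. What the sketch is meant to show is that each rule carries an identifier (traceable), lives in a locally maintained list (locally correct), and gives the same output for the same input (reproducible).

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Result:
    analyte: str
    value: float
    unit: str


@dataclass(frozen=True)
class ReflexRule:
    rule_id: str                        # traceable identifier, e.g. an SOP reference
    analyte: str
    condition: Callable[[float], bool]  # "insight": when does the rule fire?
    action: str                         # "action": what should happen next?


# Hypothetical local rule set. Thresholds are placeholders, not clinical guidance.
LOCAL_RULES: List[ReflexRule] = [
    ReflexRule("SOP-EXAMPLE-001", "TSH", lambda v: v > 4.0, "add fT4"),
    ReflexRule("SOP-EXAMPLE-002", "TSH", lambda v: v < 0.4, "add fT4 and fT3"),
]


def derive_actions(result: Result,
                   rules: List[ReflexRule] = LOCAL_RULES) -> List[Tuple[str, str]]:
    """Return (rule_id, action) pairs for every rule that fires on this result."""
    return [
        (rule.rule_id, rule.action)
        for rule in rules
        if rule.analyte == result.analyte and rule.condition(result.value)
    ]


if __name__ == "__main__":
    print(derive_actions(Result("TSH", 6.2, "mU/L")))
```

With the placeholder thresholds above, a TSH of 6.2 triggers only the first rule, and the returned rule identifier documents why the follow-up test was added. An LLM could help phrase the accompanying comment, but in this sketch the decision itself stays in the rule table.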

Practical examples: where specialist knowledge is required

There are diagnostic areas in which domain-specific depth is decisive and generic, highly aggregated interpretation typically reaches its limits — especially where reference logic, specialised methodology, or context-dependent algorithms are required:

Cerebrospinal fluid diagnostics (Reiber scheme and oligoclonal bands)

Here, explaining individual lab values and offering general statements is rarely enough. The diagnostic significance emerges from patterns, relationships (serum/CSF), methodological particularities, and the interplay of parameters.
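
To make the serum/CSF relationship concrete, the following sketch evaluates the IgG part of a Reiber-type quotient diagram. It assumes the commonly cited hyperbolic limit function for IgG, Q_lim = 0.93 · sqrt(Q_alb² + 6·10⁻⁶) − 1.7·10⁻³; the constants, the function names, and the input values are assumptions for illustration and should be checked against the locally validated method before any use. Oligoclonal bands and the IgA/IgM limits are deliberately left out.

```python
import math


def quotient(csf_conc: float, serum_conc: float) -> float:
    """CSF/serum concentration quotient; both values must share the same unit."""
    if serum_conc <= 0:
        raise ValueError("serum concentration must be positive")
    return csf_conc / serum_conc


def reiber_igg_limit(q_alb: float) -> float:
    """Upper limit of the IgG quotient for a given albumin quotient.

    Constants are the commonly cited values for IgG (an assumption here);
    verify them against the local laboratory standard.
    """
    return 0.93 * math.sqrt(q_alb ** 2 + 6e-6) - 1.7e-3


def intrathecal_igg_fraction(q_igg: float, q_alb: float) -> float:
    """Fraction of CSF IgG attributed to intrathecal synthesis (0.0 if none)."""
    q_lim = reiber_igg_limit(q_alb)
    if q_igg <= q_lim:
        return 0.0
    return 1.0 - q_lim / q_igg


if __name__ == "__main__":
    q_alb = quotient(csf_conc=200.0, serum_conc=40_000.0)  # mg/L, hypothetical
    q_igg = quotient(csf_conc=50.0, serum_conc=10_000.0)   # mg/L, hypothetical
    print(f"Q_alb={q_alb:.4f}  Q_IgG={q_igg:.4f}  "
          f"Q_lim={reiber_igg_limit(q_alb):.5f}  "
          f"IF={intrathecal_igg_fraction(q_igg, q_alb):.1%}")
```

The point of the sketch is that the same IgG quotient can be unremarkable or clearly pathological depending on the albumin quotient, which is why a single value taken on its own says little.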

Special coagulation diagnostics

Interpretation often depends on specific constellations, mixing studies, clinical context (e.g. anticoagulation), and clear pathways for distinguishing deficiency from inhibitor. It is rule-based — but not trivial.

Therapeutic drug monitoring (TDM)

Drug levels are of limited interpretive value without a time reference, dosage, interactions, and clinical target ranges. The distinction between “abnormal” and “clinically actionable” is especially important.
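
A minimal sketch of this gating idea follows: a drug level is only compared with a target range once the sampling time and dose are known. The types `DrugLevel` and `TargetRange`, the function `assess`, and all numeric values are hypothetical and chosen for illustration only; interactions and the clinical question are not modelled here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DrugLevel:
    drug: str
    concentration: float                     # in the unit of the target range
    hours_since_last_dose: Optional[float]   # None if not documented
    daily_dose_mg: Optional[float]           # None if not documented


@dataclass(frozen=True)
class TargetRange:
    low: float
    high: float
    min_hours_after_dose: float  # earliest sampling time accepted as a trough


def assess(level: DrugLevel, target: TargetRange) -> str:
    """Classify a level, refusing to interpret it when context is missing."""
    if level.hours_since_last_dose is None or level.daily_dose_mg is None:
        return "not interpretable: sampling time or dose missing"
    if level.hours_since_last_dose < target.min_hours_after_dose:
        return "not interpretable: sample drawn too early for a trough level"
    if level.concentration < target.low:
        return "below target range"
    if level.concentration > target.high:
        return "above target range"
    return "within target range"


if __name__ == "__main__":
    # Hypothetical target range and samples, not clinical guidance.
    target = TargetRange(low=5.0, high=10.0, min_hours_after_dose=10.0)
    print(assess(DrugLevel("example-drug", 12.0, 2.0, 200.0), target))
    print(assess(DrugLevel("example-drug", 12.0, 12.0, 200.0), target))
```

In this sketch the same concentration of 12.0 is first rejected as not interpretable (drawn two hours after the dose) and only then reported as above range when it is a true trough. Whether an "above target range" result is clinically actionable remains a separate judgment.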

Genetics and microbiome (as examples of highly complex domains)

Here, interpretations arise from data pipelines, classification logic, and professional standards. The challenge is less “knowledge” and more correct classification under defined rules.

The common denominator is this: the more specialised the question, the more diagnostics becomes a combination of data, rules, context, and experience. This is exactly where reporting at the laboratory or specialist level remains a distinct, non-substitutable source of value — analogous to specialised AI in radiology, which cannot do “everything,” but can be very precise within a clearly defined area.

Conclusion: why differentiated interpretation on multiple levels remains necessary

Large language models open up new horizons in medical information processing. They can quickly consolidate enormous amounts of data, create summaries, and enable both patients and professionals to gain a broader perspective on complex issues. But as impressive as this breadth is, it does not automatically replace the diagnostic depth that arises at specialised levels of the system, especially where reference logic, local processes, and domain knowledge make the difference.

Multi-level interpretation, from validating individual measured values to holistic treatment recommendations, remains necessary even in the age of AI. Each of these steps adds value: laboratory analysis provides the reliable data foundation, specialist medical expertise ensures the correct interpretation of the individual puzzle pieces, and LLM-supported aggregation can form an overall picture and assist decision-making. If one level is neglected, the overall result suffers. Only by integrating all levels can it be ensured that patients benefit from interpretations that are both comprehensive and precise. The future of medical data interpretation therefore lies in a hybrid approach: global AI on the one hand, local medical expertise on the other, linked through intelligent workflows for the benefit of better diagnostics and therapy.

Three learnings for laboratory medicine in the age of AI

- Domain know-how remains a differentiator — especially in depth

A substantial share of diagnostic value creation arises where specialised logic matters: oligoclonal bands and the Reiber scheme, special coagulation diagnostics, TDM, genetics/microbiome. These areas benefit from AI, but they require domain-specific models, rules, and expertise, not just general text generation.

- Anyone who wants to remain relevant in the ecosystem must design data cleanly in both directions

Quality does not arise from “more data,” but from structured, consistent, and contextualised data. Feedback loops are also decisive: only when relevant contextual data (clinical information, previous findings, treatment course) flow back can interpretation become more precise at the right point.

- Intelligent workflows connect insight and action

The leverage lies in orchestration: recognising what is possible and meaningful, and deriving the next step from it — ideally through clear, traceable pathways (including reflex strategies and defined responsibilities). LLMs can help, but the workflow must be professionally sound and locally correct.